Spacetime Texture Dataset

Konstantinos G. Derpanis and Richard P. Wildes

Contact email kosta at cse dot yorku dot ca

Department of Computer Science and Engineering and Centre for Vision Research

York University, Toronto, ON, Canada

Overview

This webpage provides a spacetime texture database.

The database contains a total of 610 spacetime texture samples. The videos were obtained from various sources, including a Canon HF10 camcorder and the BBC Motion Gallery; the videos vary widely in their resolution, temporal extents and capture frame rates. Owing to the diversity within and across the video sources, the videos generally contain significant differences in scene appearance, scale, illumination conditions and camera viewpoint. Also, for the stochastic patterns, variations in the underlying physical processes ensure large intra-class variations.

For details of our technical approach to spacetime structure grouping, see our project page.

Database Organization

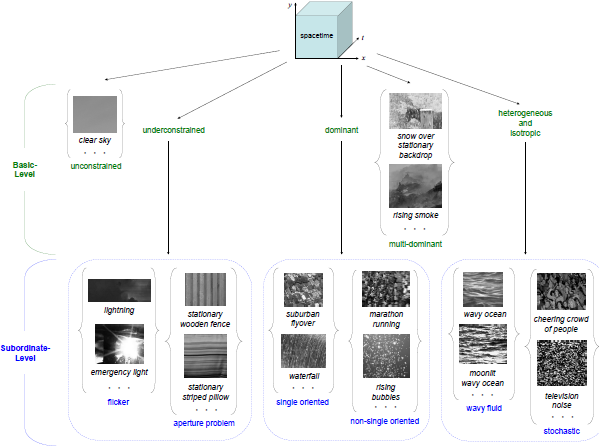

The figure below illustrates the overall organization of the York University Vision Lab (YUVL) spacetime texture database.

The data set is partitioned in two ways: (i) basic-level and (ii) subordinate-level, by analogy to terminology for capturing the hierarchical nature of human categorical perception [3]. The basic-level partition is based on the number of spacetime orientations present in a given pattern; for a detailed description of these categories, see Section 2.1 of [2]. For the multi-dominant category, samples were limited to two superimposed structures (e.g., rain over a stationary background). Note that the basic-level partition is not arbitrary: it follows from the systematic enumeration of dynamic patterns based on their spacetime oriented structure presented in Section 2.1 of [2]. Furthermore, each of these categories has been central to research regarding the representation of image-related dynamics, typically considered on a case-by-case basis.

To evaluate the ability to make finer categorical distinctions, several of the basic-level categories were further partitioned into subordinate levels. This partition is based on the particular spacetime orientations present in a given pattern. Beyond the basic-level categorization of an unconstrained oriented pattern (i.e., unstructured), no further subdivision based on pattern dynamics is possible. Underconstrained cases arise naturally as the aperture problem and pure temporal variation (i.e., flicker). Dominant oriented patterns can be distinguished by whether a single orientation describes the pattern (e.g., motion) or a narrow range of spacetime orientations is distributed about a given orientation. Note that further distinctions might be made based, for example, on the velocity of motion (e.g., stationary vs. rightward motion vs. leftward motion). In the case of multi-dominant oriented patterns, the initial choice of restricting patterns to two components to populate the database precludes further meaningful parsing. The heterogeneous and isotropic basic-level category was partitioned into wavy fluid patterns and those more generally stochastic in nature. Further parsing within this category is possible; however, since that more granular partition is considered in the UCLA data set [4], the YUVL data set considers only a two-way subdivision of the heterogeneous and isotropic basic-level category.
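The two-level organization described above can be sketched as a small lookup structure. This is only an illustrative sketch: the label strings are paraphrased from the description on this page and are assumptions, not the actual directory or file names used in the download, and the helper functions `basic_level_of` and `leaf_labels` are hypothetical.

```python
# Illustrative sketch of the basic-level / subordinate-level partition.
# ASSUMPTION: label strings are paraphrased from the text; the names used
# in the downloadable database may differ.
TAXONOMY = {
    "unconstrained": [],  # no further subdivision possible
    "underconstrained": ["aperture problem", "flicker"],
    "dominant": ["single orientation", "narrow orientation range"],
    "multi-dominant": [],  # limited to two components, not parsed further
    "heterogeneous and isotropic": ["wavy fluid", "stochastic"],
}


def basic_level_of(subordinate):
    """Return the basic-level category that contains a subordinate label."""
    for basic, subordinates in TAXONOMY.items():
        if subordinate in subordinates:
            return basic
    raise KeyError(subordinate)


def leaf_labels():
    """Return the finest label available along each branch of the hierarchy."""
    leaves = []
    for basic, subordinates in TAXONOMY.items():
        # A basic-level category without subdivisions is its own leaf.
        leaves.extend(subordinates if subordinates else [basic])
    return leaves
```

For example, `basic_level_of("flicker")` returns `"underconstrained"`, so a classifier trained on subordinate labels could also be scored at the basic level by mapping its predictions through this function.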

Download

Spacetime Texture Database (2.6GB download)

Related Papers

[1] K.G. Derpanis and R.P. Wildes, Dynamic Texture Recognition based on Distributions of Spacetime Oriented Structure, In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2010.

[2] K.G. Derpanis and R.P. Wildes, Spacetime Texture Representation and Recognition Based on a Spatiotemporal Orientation Analysis, IEEE Transactions on Pattern Analysis and Machine Intelligence (PAMI), to appear.

[3] E. Rosch and C. Mervis, Family resemblances: Studies in the internal structure of categories. Cognitive Psychology, vol. 7, pp. 573-605, 1975.

[4] P. Saisan, G. Doretto, Y. Wu, and S. Soatto, Dynamic texture recognition, in CVPR, 2001, pp. II:58-63.

Last updated: October 6, 2011.
